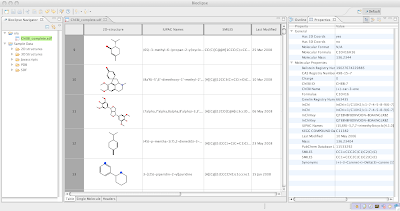

I decided to test the performance of Bioclipse 2 (current release 2.0.0RC5) when working with large structural files (SDFiles). I first loaded the complete ChEBI (Chemical Entities of Biological Interest) collection, which consisted of 13,486 chemical structures and has a file size of 54 MB on disk. This was very fast: Bioclipse indexed and opened the file in less than 4 seconds, and then continued to parse the properties in the background for another 4 seconds (during this time it is already possible to browse and work with the structures). The MolTable editor was very responsive and scrolled nicely.

To really push Bioclipse, the first 225,000 compounds in PubChem were concatenated into a test file, resulting in an SDFile of 1.1 GB. Bioclipse created an index of the file and opened it in 66 seconds. It then continued parsing the properties in the background, which took another 78 seconds. The MolTable editor was still very responsive and scrolled nicely. Not bad for such a large file!
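To illustrate why indexing makes browsing cheap, here is a minimal Python sketch of an offset index over an SDFile. It is not Bioclipse's actual implementation (Bioclipse is a Java application and its indexer is not shown here); the function names `build_sdf_index` and `read_record` are made up for this example. The only format fact it relies on is that records in an SDFile are terminated by a line containing `$$$$`.

```python
RECORD_END = b"$$$$"  # line that terminates each record in an SDFile


def build_sdf_index(stream):
    """Scan a binary stream once and return the byte offset of each record start."""
    offsets = []
    record_start = stream.tell()
    saw_content = False
    while True:
        line = stream.readline()
        if not line:
            break
        if line.strip() == RECORD_END:
            offsets.append(record_start)
            record_start = stream.tell()  # the next record begins right after the delimiter
            saw_content = False
        elif line.strip():
            saw_content = True
    if saw_content:
        # Final record that is missing its trailing delimiter.
        offsets.append(record_start)
    return offsets


def read_record(stream, offsets, i):
    """Seek straight to record i and return its raw bytes, delimiter excluded."""
    stream.seek(offsets[i])
    lines = []
    for line in iter(stream.readline, b""):
        if line.strip() == RECORD_END:
            break
        lines.append(line)
    return b"".join(lines)
```

With the file opened in binary mode (`open(path, "rb")`), the index costs one integer per structure, and a table editor only needs to call something like `read_record` for the rows currently on screen.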

Calculating InChI for the >1 GB file while it was open in MoleculesTable (so that all InChI properties are kept in memory) took 13.20 min. Saving the resulting file took 2 min 49 seconds for the first 20 MB, which extrapolates to about 2 h 20 min for the whole file (this forces a complete save of all chemical structures and a lot of swapping to and from disk). Calculating the same InChI and saving to file without opening it in MolTable first (which avoids keeping all properties in memory) took 20 minutes. What do we learn from this? Browsing large files is fine, but if you want to manipulate them, do so on the file directly, without visual inspection.
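The "work on the file directly" approach can be sketched as a streaming pass that reads one record, computes a property, writes the record back out, and then forgets it. This is a Python illustration under stated assumptions, not the Bioclipse code: `iter_sdf_records` and `add_property` are hypothetical names, and `compute` is a placeholder callback standing in for a real InChI generator from a cheminformatics toolkit.

```python
def iter_sdf_records(stream):
    """Yield one SDF record at a time as a list of text lines (delimiter excluded)."""
    record = []
    for line in stream:
        if line.rstrip("\r\n") == "$$$$":
            yield record
            record = []
        else:
            record.append(line)
    if any(l.strip() for l in record):
        # Final record that is missing its trailing delimiter.
        yield record


def add_property(src, dst, name, compute):
    """Copy records from src to dst, appending one data item to each record.

    `compute` is a hypothetical callback: it receives the record text and
    returns the property value as a string (e.g. an InChI).
    Only one record is held in memory at any time.
    """
    count = 0
    for record in iter_sdf_records(src):
        value = compute("".join(record))
        dst.writelines(record)
        # SDF data item: header line, value line, blank line, then the delimiter.
        dst.write(f"> <{name}>\n{value}\n\n$$$$\n")
        count += 1
    return count
```

Memory use stays roughly constant regardless of file size, which is the property that matters for files in the gigabyte range.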

As a side note: Handling large SDFiles is generally not a recommended solution. When StructureDB (a relational database for chemistry) is released for Bioclipse, we will see a dramatic performance boost when dealing with large collections of molecules.

2009-07-03

I guess you are not using EMF?

We also have large data files. Unfortunately, they are in XML, which makes loading very time-consuming. However, with CDO we will also see better loading times.

Henke, it is a custom application using indexing in the back. The file currently being read is not XML, but we want to move there soon... The files we need to handle are in the gigabyte range, so a DOM is not possible...

I have no clue yet how to make this fast, but I just asked around:

http://friendfeed.com/the-life-scientists/78c211d6/dear-lazyweb-does-anyone-know-of-some-good